
I am just starting in deep learning and I have to implement a model in Python that can detect the inference relation between two sentences (the label is neutral, contradiction or entailment). I have read other answers about the same problem but couldn't debug mine.

To accomplish this task, I chose to use Keras, which seemed relatively easy to use. The dataset is formatted as follows:

| index | sentence_1 | sentence_2 | label |
|-------|------------|------------|-------|

I encoded the sentences with GloVe embedding vectors (dim=50) and then padded them with maxlen=80. I end up with a new pandas DataFrame:

| index | sentence_1_padded | sentence_2_padded | label | target |
|-------|-------------------|-------------------|-------|--------|
| 5000 | 80x50 matrix | 80x50 matrix | contradiction | 0 |

Each embedded vector and each sequence is a NumPy array. I want to train my model using this transformed dataset, so I built this model:

```python
vocab_size = len(glove_wordmap)+1
embedding_layer = Embedding(vocab_size, 128)(inputs)
predictions = Dense(3, activation='softmax')(x)
model = Model(inputs=, outputs=predictions)
X = dataset_processed]
model.fit(X, to_categorical(y), epochs=5, batch_size=32, validation_split=0.25)
```

And I have the following error that keeps coming back:

```
Train on 3750 samples, validate on 1250 samples

ValueError                                Traceback (most recent call last)
> 1 model.fit(X, to_categorical(y), epochs=5, batch_size=32, validation_split=0.25)

usr/local/lib/python3.6/dist-packages/keras/engine/training.py in fit(self, x, y, batch_size, epochs, verbose, callbacks, validation_split, validation_data, shuffle, class_weight, sample_weight, initial_epoch, steps_per_epoch, validation_steps, validation_freq, max_queue_size, workers, use_multiprocessing, **kwargs)

usr/local/lib/python3.6/dist-packages/keras/engine/training_arrays.py in fit_loop(model, fit_function, fit_inputs, out_labels, batch_size, epochs, verbose, callbacks, val_function, val_inputs, shuffle, callback_metrics, initial_epoch, steps_per_epoch, validation_steps, validation_freq)
    202 ins_batch = ins_batch.toarray()

usr/local/lib/python3.6/dist-packages/keras/backend/tensorflow_backend.py in _call_(self, inputs)
   2981 if py_any(is_tensor(x) for x in inputs):
```
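A likely source of the error, given the DataFrame above: each `sentence_1_padded` / `sentence_2_padded` cell holds its own 80x50 array, so the column is an object-dtype array of arrays rather than one numeric tensor, and casting it to float fails with "setting an array element with a sequence". The sketch below illustrates that assumption with a synthetic object array standing in for `dataset_processed['sentence_1_padded'].values`; the helper name `column_to_tensor` is hypothetical. Stacking the cells with `np.stack` yields a single `(n_samples, 80, 50)` float array.

```python
import numpy as np

MAXLEN, DIM = 80, 50  # padding length and GloVe dimension from the post


def column_to_tensor(column_values):
    """Stack per-row (MAXLEN, DIM) arrays into one float32 tensor of shape (n, MAXLEN, DIM)."""
    # np.stack needs every cell to have the same shape; it raises ValueError otherwise.
    tensor = np.stack(list(column_values)).astype(np.float32)
    if tensor.shape[1:] != (MAXLEN, DIM):
        raise ValueError(f"expected per-sample shape {(MAXLEN, DIM)}, got {tensor.shape[1:]}")
    return tensor


# Synthetic stand-in for dataset_processed['sentence_1_padded'].values:
# an object-dtype array whose cells are themselves (80, 50) arrays.
n_samples = 4
rng = np.random.default_rng(0)
cells = np.empty(n_samples, dtype=object)
for i in range(n_samples):
    cells[i] = rng.normal(size=(MAXLEN, DIM))

# Casting the object array straight to float fails; NumPy typically reports
# "setting an array element with a sequence".
try:
    np.asarray(cells, dtype=np.float32)
except (ValueError, TypeError) as exc:
    print("direct conversion failed:", exc)

X1 = column_to_tensor(cells)
print(X1.shape, X1.dtype)  # (4, 80, 50) float32
```

If the model is built with two `Input` layers (one per sentence), Keras accepts a list of arrays, e.g. `model.fit([X1, X2], to_categorical(y), ...)`. Note also that a Keras `Embedding` layer expects integer token indices; when the inputs are already 50-dimensional GloVe vectors, feeding an `Input(shape=(80, 50))` directly into the rest of the network is probably closer to the intent than `Embedding(vocab_size, 128)`.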

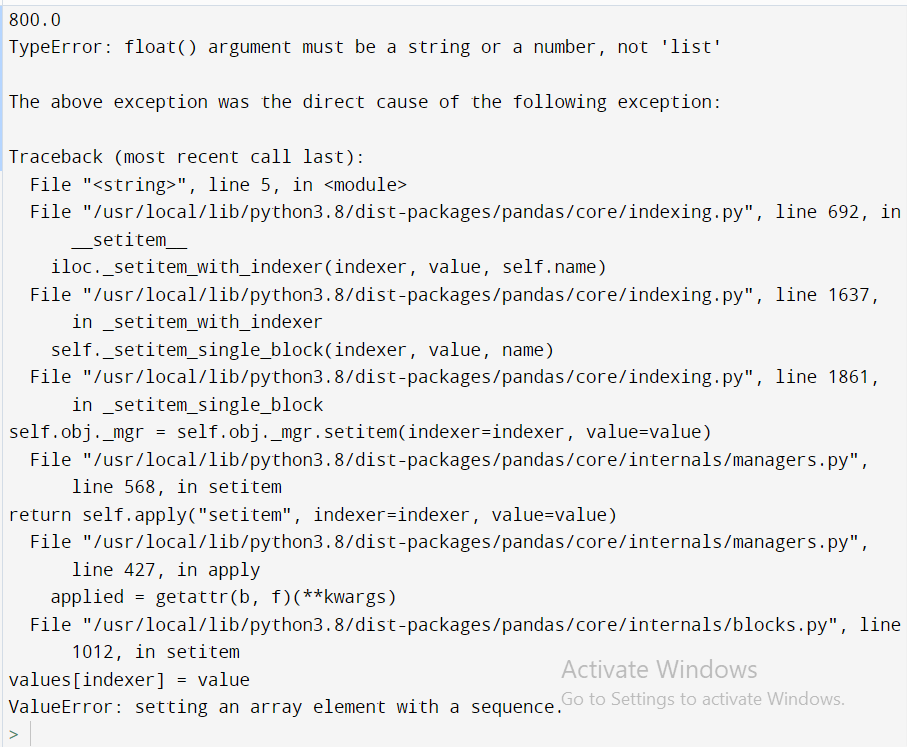
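The `target` column and the `fit` arguments can be sanity-checked without Keras. `to_categorical` turns integer class ids into one-hot rows, and `validation_split` holds out the last fraction of the samples (taken before shuffling), which is where "Train on 3750 samples, validate on 1250 samples" comes from with 5000 rows and `validation_split=0.25`. This NumPy sketch reproduces both; only `contradiction -> 0` appears in the post, so the other ids in `LABEL_TO_ID` are assumptions, and `one_hot` / `split_sizes` are hypothetical helper names.

```python
import numpy as np

# Hypothetical mapping: the post only shows "contradiction" -> 0.
LABEL_TO_ID = {"contradiction": 0, "neutral": 1, "entailment": 2}


def one_hot(ids, num_classes):
    """One-hot encode integer class ids, as keras.utils.to_categorical does."""
    ids = np.asarray(ids, dtype=np.int64)
    out = np.zeros((ids.shape[0], num_classes), dtype=np.float32)
    out[np.arange(ids.shape[0]), ids] = 1.0
    return out


def split_sizes(n_samples, validation_split):
    """Train/validation sizes when the last `validation_split` fraction is held out."""
    split_at = int(n_samples * (1.0 - validation_split))
    return split_at, n_samples - split_at


labels = ["contradiction", "neutral", "entailment", "neutral"]
y = np.array([LABEL_TO_ID[label] for label in labels])
Y = one_hot(y, num_classes=3)
print(Y.argmax(axis=1))  # [0 1 2 1]

# 5000 rows with validation_split=0.25, as in the log above.
print(split_sizes(5000, 0.25))  # (3750, 1250)
```

Because the validation rows are the last 25% of the data, a DataFrame sorted by label would give a validation set dominated by one class; shuffling the rows before calling `fit` avoids that.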

I am trying to train a logistic regression model in scikit-learn (sklearn) with the following code:

```python
preds = np.zeros((len(test), len(label_cols)))
model = linear_model.LogisticRegression(C=4, dual=True)
```

I have a multi-label classification problem, and `train` represents one class at a time in order to train an LR model for each of the labels.

But I get the following error:

```
ValueError                                Traceback (most recent call last)
      3 model = linear_model.LogisticRegression(C=4, dual=True)
      4 model.fit(train_x, list(train))
> 5 preds = m.predict_proba(test_x)

C:\ProgramData\Anaconda3\lib\site-packages\sklearn\linear_model\logistic.py in predict_proba(self, X)
   1334 calculate_ovr = ef_.shape = 1 or self.multi_class = "ovr"
> 1336 return super(LogisticRegression, self)._predict_proba_lr(X)
   1338 return softmax(cision_function(X), copy=False)

C:\ProgramData\Anaconda3\lib\site-packages\sklearn\linear_model\base.py in _predict_proba_lr(self, X)
    336 multiclass is handled by normalizing that over all classes.

C:\ProgramData\Anaconda3\lib\site-packages\sklearn\linear_model\base.py in decision_function(self, X)
    298 "yet" % )
> 300 X = check_array(X, accept_sparse='csr')

C:\ProgramData\Anaconda3\lib\site-packages\sklearn\utils\validation.py in check_array(array, accept_sparse, dtype, order, copy, force_all_finite, ensure_2d, allow_nd, ensure_min_samples, ensure_min_features, warn_on_dtype, estimator)
> 433 array = np.array(array, dtype=dtype, order=order, copy=copy)

ValueError: setting an array element with a sequence.
```
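The scikit-learn traceback ends in `check_array`, which converts `test_x` with `np.array`; this ValueError typically means the rows do not form one rectangular numeric array (for instance, a list or pandas column whose cells are themselves sequences). A minimal sketch, assuming each row of `train_x` / `test_x` is a per-sample array; the helper `as_feature_matrix` is hypothetical. It flattens every row into a 2-D `(n_samples, n_features)` float matrix and rejects ragged input with a readable message instead of failing deep inside scikit-learn.

```python
import numpy as np


def as_feature_matrix(rows):
    """Build the 2-D float matrix (n_samples, n_features) that scikit-learn estimators expect.

    Each per-sample array is flattened, so an 80x50 matrix becomes 4000 features.
    Ragged input is rejected with a readable message instead of failing in check_array.
    """
    flat = [np.asarray(row, dtype=np.float64).ravel() for row in rows]
    if not flat:
        raise ValueError("no rows given")
    lengths = sorted({vec.shape[0] for vec in flat})
    if len(lengths) != 1:
        raise ValueError(f"rows have different feature counts: {lengths}")
    return np.vstack(flat)


# Hypothetical rows: one array per sample, as a pandas column of arrays would hold.
X_train = as_feature_matrix([np.ones(3), np.zeros(3), np.arange(3)])
print(X_train.shape)  # (3, 3)

print(as_feature_matrix([np.zeros((80, 50)), np.ones((80, 50))]).shape)  # (2, 4000)

try:
    as_feature_matrix([np.ones(3), np.ones(5)])
except ValueError as exc:
    print(exc)  # rows have different feature counts: [3, 5]
```

In the per-label loop, each fitted binary model's `predict_proba(X_test)[:, 1]` (the positive-class probability, assuming 0/1 labels) could then fill one column of `preds`. Also note that `dual=True` is only implemented for the `liblinear` solver with the l2 penalty, so on scikit-learn versions whose default solver is `lbfgs` it would need `solver='liblinear'` alongside it.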
